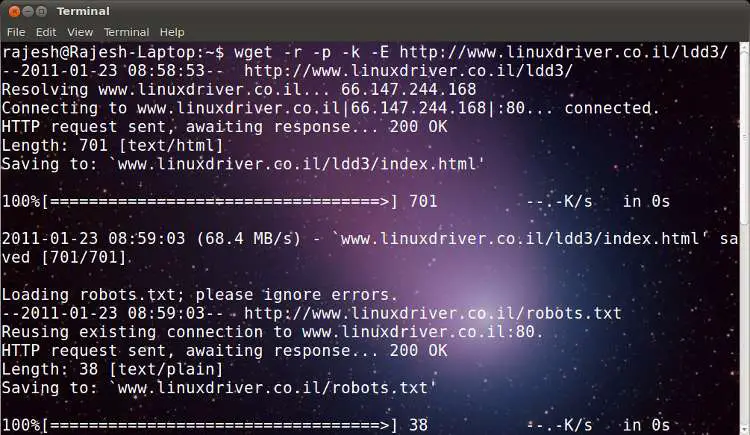

Here is a simple and effective way to download files recursively from a website without visiting every link to its sub-pages. It is also useful when the pages are served as XHTML or text/html without an .html extension: an appropriate switch such as -E saves them with one. Go to the directory into which you wish to download the site's content, and use the following command:
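A minimal sketch of that command, assuming the four switches described in this tip are passed together. The function name `mirror_site` and the URL `https://example.com/` are placeholders for illustration, not part of the original tip:

```shell
# mirror_site is a hypothetical helper name; it is not part of wget.
mirror_site() {
    if [ -z "$1" ]; then
        echo "usage: mirror_site URL" >&2
        return 1
    fi
    # -r: recurse through links, -p: fetch page requisites,
    # -k: convert links for local viewing, -E: add .html where needed
    wget -r -p -k -E "$1"
}

# Example call (placeholder URL), run from the target directory:
#   mirror_site https://example.com/
```

Typed directly at the prompt, this amounts to `wget -r -p -k -E` followed by the address of the site.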

…where:

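-r is for recursive download of pages, recreating the site's directory structure locally

-p is for downloading everything needed to display each page, such as images and stylesheets

-k is for converting the links in the downloaded pages to point to the local copies, so that users can browse them easily once the download is completed, and…

-E is for adding an .html extension to XHTML or text/html files that lack one.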

Enjoy, and try it out on different content on the Net. Do not forget to check the manual page for wget; there is always more information there.
